Bringing a Blurry Frame Alive at High Frame-Rate with an Event Camera

L. Pan, C. Scheerlinck, X. Yu, R. Hartley, M. Liu, Y. Dao

Conference on Computer Vision and Pattern Recognition (CVPR; ORAL accept. rate 6%), 2019

PDF Video Code Data Slides BibTex

CVPR talk here

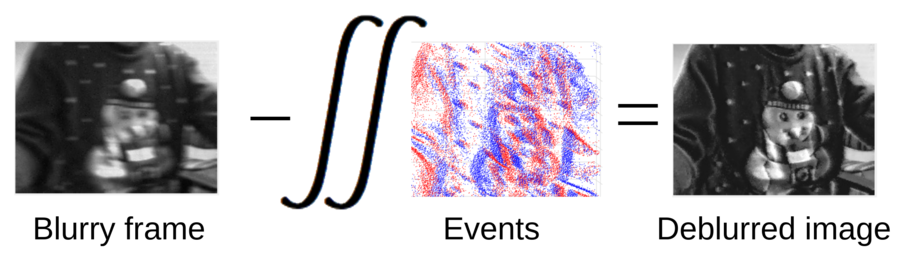

Abstract. Event-based cameras can measure intensity changes (called ‘events’) with microsecond accuracy under high-speed motion and challenging lighting conditions. With the active pixel sensor (APS), the event camera allows simultaneous output of the intensity frames. However, the output images are captured at a relatively low frame-rate and often suffer from motion blur. A blurry image can be regarded as the integral of a sequence of latent images, while the events indicate the changes between the latent images. Therefore, we are able to model the blur-generation process by associating event data to a latent image. In this paper, we propose a simple and effective approach, the Event-based Double Integral (EDI) model, to reconstruct a high frame-rate, sharp video from a single blurry frame and its event data. The video generation is based on solving a simple non-convex optimization problem in a single scalar variable. Experimental results on both synthetic and real images demonstrate the superiority of our EDI model and optimization method in comparison to the state-of-the-art.

CVF Open Access

Reference:

- L. Pan, C. Scheerlinck, X. Yu, R. Hartley, M. Liu, Y. Dao “Bringing a Blurry Frame Alive at High Frame-Rate with an Event Camera”, Conference on Computer Vision and Pattern Recognition (CVPR), 2019, pp. 6820-6829.